
Building software used to be about logic, loops, and predictable outcomes. You wrote a command, and the machine followed it. However, the shift toward AI software development has rewritten the rulebook.

Nowadays, developers aren’t just writing code; they are cultivating systems that learn, adapt, and occasionally hallucinate. This transition from deterministic programming to probabilistic learning has turned the development lifecycle into a high-stakes balancing act of data science and traditional engineering.

The reality is that AI software development challenges run much deeper than standard debugging. Unlike traditional apps, AI systems are hungry for massive, high-quality datasets and require specialized infrastructure that can handle intense computational loads.

If you don’t address these challenges of artificial intelligence implementation during the architectural phase, you risk building a “black box” that is impossible to maintain, audit, or scale. Success in this field requires a shift in mindset: you must move from simply writing instructions to managing the complex, often unpredictable behavior of evolving models.

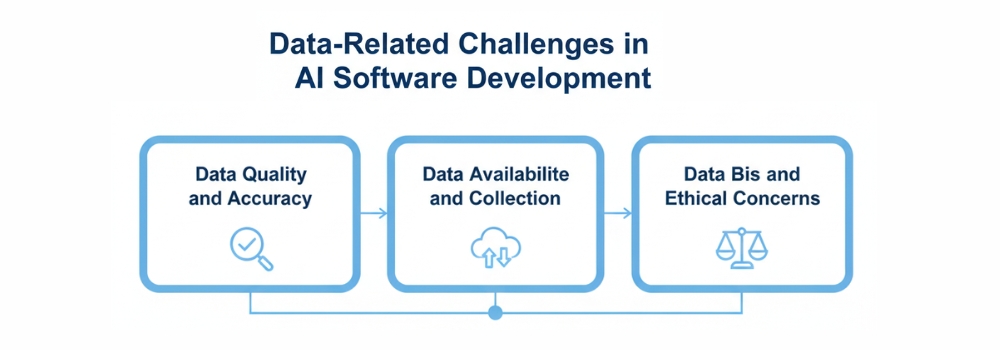

Data-Related Challenges in AI Software Development

Why are so many organizations watching their AI investments vanish into a “black hole” of failed pilots? According to multiple industry surveys, many organizations struggle to scale AI projects beyond proof-of-concept due to gaps in governance, infrastructure, and talent.

The culprit isn’t usually the algorithm; it’s the foundation. In AI software development, your results are only as intelligent as the data you provide. If you aren’t addressing the specific data-related challenges of artificial intelligence, you aren’t building a solution; you’re building a liability.

1. Data Quality and Accuracy: The “Silent Failure” Risk

In traditional software, a bug causes a crash. In AI software development, poor data quality causes a “silent failure,” a model that appears to work perfectly but provides confidently wrong or hallucinatory outputs.

- The Hallucination Trap: As we’ve seen in 2026, even enterprise-grade agents struggle with “synthetic feedback loops,” a form of unintentional data poisoning in which AI-generated data is accidentally fed back into training sets, degrading the model’s logic over time.

- Accuracy vs. Precision: In high-stakes fields like finance or healthcare, the tolerance for error is close to zero. Flawed or unrepresentative training data also leaves models more exposed to “model drift,” where an AI’s accuracy slowly erodes as real-world conditions diverge from its training data.

- The Integrity Differentiator: The industry has shifted its focus from the volume of data to the integrity of data. Success today requires rigorous “freshness checks” and automated schema-change alerts to catch errors before they infect the model.
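To make the “freshness checks” and “schema-change alerts” above concrete, here is a minimal Python sketch. It assumes each incoming batch arrives as a list of dictionaries with a known last-updated timestamp; the column names, types, and 24-hour window are hypothetical placeholders, not a standard.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical data contract for one incoming batch: column name -> expected Python type.
EXPECTED_SCHEMA = {"customer_id": int, "amount": float, "country": str}
MAX_AGE = timedelta(hours=24)  # assumed freshness window


def check_batch(rows, last_updated, now=None):
    """Return human-readable alerts; an empty list means the batch passed."""
    now = now or datetime.now(timezone.utc)
    alerts = []
    # Freshness check: stale data is flagged before it reaches training.
    age = now - last_updated
    if age > MAX_AGE:
        alerts.append(f"stale data: last updated {age} ago")
    # Schema-change check: compare observed columns and types with the contract.
    for i, row in enumerate(rows):
        missing = EXPECTED_SCHEMA.keys() - row.keys()
        extra = row.keys() - EXPECTED_SCHEMA.keys()
        if missing or extra:
            alerts.append(f"row {i}: schema change (missing={sorted(missing)}, extra={sorted(extra)})")
            continue
        for col, expected_type in EXPECTED_SCHEMA.items():
            if not isinstance(row[col], expected_type):
                alerts.append(f"row {i}: {col} expected {expected_type.__name__}, got {type(row[col]).__name__}")
    return alerts
```

Running a check like this before every training or fine-tuning job turns a silent failure into a loud one: any batch that returns alerts can be quarantined instead of ingested.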

2. Data Availability and Collection

The most persistent AI software development problem is the gap between the massive data requirements of modern models and the fragmented reality of corporate storage.

- The Silo Standoff: Critical data is often trapped in legacy systems or “black box” departments that don’t share information. Breaking these silos is a prerequisite for any AI software development project.

- Edge Case Scarcity: AI needs to see what rarely happens to be robust. Collecting enough high-quality, verified data for these “edge cases” remains a massive hurdle, leading many firms to pivot toward synthetic data, artificially created information that mimics real patterns without the privacy risks of raw data.

- Manual Labeling Delays: While AI can process data at lightning speed, the human-in-the-loop process of labeling that data remains slow, expensive, and prone to error, often becoming the primary bottleneck for project timelines.
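As a rough illustration of the synthetic data idea, the sketch below fits a mean and standard deviation to each numeric column of real records and samples new rows from those distributions. This is a deliberately naive approach built on loud assumptions: it ignores correlations between columns and gives no formal privacy guarantee, which is why production tools rely on more sophisticated generative models or differential privacy.

```python
import random
import statistics


def fit_columns(rows):
    """Estimate a per-column mean and standard deviation from real numeric records."""
    params = {}
    for col in rows[0].keys():
        values = [r[col] for r in rows]
        params[col] = (statistics.mean(values), statistics.stdev(values))
    return params


def sample_synthetic(params, n, seed=0):
    """Draw n synthetic records from independent Gaussians fitted to the real data.

    Assumption: columns are treated as independent, so cross-column patterns are lost.
    """
    rng = random.Random(seed)
    return [{col: rng.gauss(mu, sigma) for col, (mu, sigma) in params.items()} for _ in range(n)]
```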

3. Data Bias and Ethical Concerns

We have entered an era of “Radical Transparency.” With the phased enforcement of the EU AI Act beginning in 2025 and evolving AI governance frameworks emerging globally, addressing the challenges of artificial intelligence regarding ethics is now a legal mandate, not just a corporate social responsibility.

- Embedded Prejudices: AI models are mirrors. If your historical data reflects past hiring biases or demographic imbalances, your AI will not just repeat those mistakes; it will automate them at scale.

- The Proxy Problem: Even if you remove sensitive markers like “gender” or “race,” AI can often identify “proxies” like a postcode or a specific extracurricular activity that allow it to discriminate unintentionally.

- Explainable AI (XAI): The “Black Box” era is ending. Stakeholders now demand to know why an AI made a specific decision, and XAI tools are one of the most practical ways to build the trust necessary for widespread adoption.
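A basic bias audit can catch both embedded prejudices and proxies. The Python sketch below, using hypothetical inputs, compares selection rates across groups (the “four-fifths rule” from US employment guidance flags a ratio below 0.8) and measures how well a single feature, such as a postcode, predicts the protected attribute on its own.

```python
from collections import Counter, defaultdict


def selection_rates(decisions, groups):
    """Share of positive decisions (1) within each group."""
    totals, positives = Counter(groups), Counter()
    for decision, group in zip(decisions, groups):
        positives[group] += decision
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact(decisions, groups):
    """Lowest group selection rate divided by the highest (assumes some group has a non-zero rate)."""
    rates = selection_rates(decisions, groups)
    return min(rates.values()) / max(rates.values())


def proxy_strength(feature, groups):
    """Accuracy of guessing the protected group from the feature value alone.

    Values near 1.0 mean the feature almost fully reveals the protected attribute.
    """
    by_value = defaultdict(Counter)
    for value, group in zip(feature, groups):
        by_value[value][group] += 1
    correct = sum(counts.most_common(1)[0][1] for counts in by_value.values())
    return correct / len(groups)
```

A proxy strength near 1.0 means that dropping the “gender” or “race” column achieved little, because the model can recover it from the proxy.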

Technical Challenges in AI Software Development

Why do so many AI initiatives look brilliant in a demo but crumble the moment they face a real production workload? In 2026, the industry has realized that “making it work” and “making it work at scale” are two entirely different problems, and this gap represents one of the biggest challenges to AI adoption for many organizations.

If you’ve ever wondered why your engineering team is debating model parameters for weeks or why your cloud bill spiked 300% after a minor update, you’ve hit the wall of technical challenges in AI software development. Moving beyond the hype requires solving three major structural hurdles.

1. Model Selection and Algorithm Complexity

Are you choosing the most powerful model available, or the one that actually fits your use case? In 2026, “bigger” is no longer synonymous with “better.”

The sheer variety of LLMs, SLMs (Small Language Models), and domain-specific architectures has turned model selection into a high-stakes strategic decision.

- The Overkill Trap: Using a massive, multi-billion-parameter model for a simple classification task is like using a rocket ship to go to the grocery store. It’s expensive, slow, and unnecessarily complex.

- Context Window Management: With models now supporting millions of tokens in context, engineers face a new AI software development problem: “context poisoning.” Too much information can lead the AI to lose focus on the core instruction.

- The Black Box Dilemma: As algorithms grow more complex, they become harder to debug. Teams are now forced to implement “Explainable AI” (XAI) frameworks just to understand why a model made a specific prediction, adding another layer of complexity to the development stack.
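One common response to the “too much information” problem is to curate the context rather than fill the window. The sketch below assumes a retrieval step has already scored each chunk for relevance, and it greedily keeps the best chunks that fit within a token budget; the whitespace word count is a crude stand-in for a real tokenizer.

```python
def pack_context(chunks, budget_tokens, count_tokens=lambda text: len(text.split())):
    """Greedily keep the highest-scoring chunks that fit within a token budget.

    chunks: list of (relevance_score, text) pairs from a retrieval step (assumed).
    count_tokens: rough word count used here in place of a real tokenizer.
    """
    selected, used = [], 0
    for score, text in sorted(chunks, key=lambda c: c[0], reverse=True):
        cost = count_tokens(text)
        if used + cost <= budget_tokens:
            selected.append(text)
            used += cost
    return selected, used
```

Keeping the prompt small and relevant also lowers cost and latency, which ties model selection directly to the scalability concerns below.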

2. Scalability and Performance Issues

Can your AI handle 10,000 concurrent users without the latency making the app unusable? Scalability and performance are often where even the most promising AI projects stall.

- The Latency War: In 2026, users expect instant responses. However, deep learning models are computationally “heavy.” Balancing model accuracy with “Inference Speed” is a constant tug-of-war.

- Resource Orchestration: AI requires specialized hardware (GPUs and TPUs). Managing these resources effectively, using techniques like quantization to shrink models or “Model Sharding” to spread the load, is now a core requirement for any AI software development project.

- The Cost of Scaling: Cloud compute costs, particularly in AI agent development, can spiral out of control. Without strict “Rate Limiting” and “Token Budgeting,” a successful product can quickly become a financial liability.
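“Rate Limiting” and “Token Budgeting” are straightforward to express in code. Below is a minimal sketch of a classic token-bucket rate limiter plus a per-period token budget; the class names and limits are illustrative assumptions rather than any provider’s API.

```python
import time


class TokenBucket:
    """Token-bucket rate limiter: refills `rate` requests per second, up to `capacity`."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self.tokens = capacity
        self.last = clock()

    def allow(self):
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class TokenBudget:
    """Caps total model tokens spent per period (hypothetical per-customer limit)."""

    def __init__(self, limit):
        self.limit, self.spent = limit, 0

    def charge(self, prompt_tokens, max_output_tokens):
        # Reserve the worst case up front so a single request cannot overshoot the budget.
        cost = prompt_tokens + max_output_tokens
        if self.spent + cost > self.limit:
            return False  # reject, queue, or fall back to a cheaper model instead
        self.spent += cost
        return True
```

Injecting the clock makes the limiter easy to test; in production you would typically keep one bucket and one budget per customer or API key.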

3. Integration with Existing Systems

One of the powerful benefits of AI in software development is its ability to enhance and modernize existing systems. However, integrating modern AI with legacy infrastructure remains a significant challenge. How do you plug a 2026 AI into a 2010 database? This “Brownfield” development problem is one of the most common obstacles organizations face when adopting AI in enterprise environments.

- Legacy Architecture Friction: Most existing systems were built for “Batch Processing,” but AI thrives on “Real-Time Data Streams.” Forcing these two worlds together often requires building complex “Middleware” or “Connectors.”

- API Instability: Many AI solutions rely on external APIs. If the model provider changes their API version or adjusts their weights, it can break your entire downstream workflow.

- Security & Data Silos: Integrating AI often requires breaking down internal data silos. However, doing so without violating privacy laws or exposing sensitive IP is a significant hurdle, one that some teams tackle through “Agentic Legacy Modernization,” using AI itself to bridge the gap between old and new systems.
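To contain API instability, a common defensive pattern is to pin a specific model version and validate every response before it reaches downstream systems. The sketch below assumes a hypothetical JSON response shape and a hypothetical model identifier; substitute whatever your provider actually returns.

```python
import json

PINNED_MODEL = "example-model-2026-01-15"  # hypothetical dated model identifier


class UpstreamChanged(Exception):
    """Raised when a provider response no longer matches the contract we depend on."""


def parse_classification(raw_body, expected_model=PINNED_MODEL):
    """Validate a provider response before passing it downstream.

    The shape {"model": ..., "label": ..., "confidence": ...} is an assumption.
    """
    data = json.loads(raw_body)
    if data.get("model") != expected_model:
        raise UpstreamChanged(f"model changed: {data.get('model')!r}")
    label, confidence = data.get("label"), data.get("confidence")
    if not isinstance(label, str) or not isinstance(confidence, (int, float)):
        raise UpstreamChanged("response schema changed")
    if not 0.0 <= confidence <= 1.0:
        raise UpstreamChanged(f"confidence out of range: {confidence}")
    return label, float(confidence)
```

Raising an explicit error lets you route the request to a fallback or alert the team, rather than silently passing malformed output into the rest of the workflow.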

Infrastructure and Resource Challenges

Is your current infrastructure ready for the heavy lifting that AI demands? Transitioning from the traditional application development process to AI software development isn’t just a software update; it often requires a substantial hardware overhaul.

Many organizations find that their existing servers are simply not built for the non-linear, high-intensity processing required to keep a modern model running smoothly.

High Computing Power Requirements

The most immediate AI software development problem is the hunger for specialized computing power. Unlike standard apps that run on CPUs, AI thrives on GPUs and TPUs capable of performing millions of simultaneous calculations.

- The GPU Scarcity: In 2026, securing reliable, high-performance compute cycles remains a logistical hurdle, often leading to fluctuating costs and deployment delays.

- Energy Consumption: Beyond the hardware itself, the electricity required to train and run large-scale models is a significant operational expense that can quickly erode a project’s ROI if not managed through “model quantization” and efficient architecture.
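Quantization trades a little precision for much smaller, cheaper models. The following sketch shows symmetric per-tensor int8 quantization on a plain Python list: storing each weight in 1 byte instead of a 4-byte float32 cuts weight memory by roughly 4x. Real frameworks add per-channel scales and calibration data, so treat this only as an illustration of the idea.

```python
def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: map floats onto integers in [-127, 127]."""
    # One shared scale for the whole tensor; fall back to 1.0 for an all-zero tensor.
    scale = max(abs(w) for w in weights) / 127 or 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights, e.g. to measure quantization error."""
    return [v * scale for v in q]
```

The reconstruction error per weight is at most half the scale, which is one reason well-behaved models can lose relatively little accuracy after quantization.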

Storage and Data Management

If compute power is the engine, data is the fuel, and you need a massive tank to store it. Managing the sheer volume of information required for AI software development challenges even the most robust data centers.

- Latency vs. Volume: You don’t just need to store petabytes of data; you need to access it at lightning speed. Traditional “Cold Storage” won’t work for real-time training, requiring expensive “High-IOPS” (Input/Output Operations Per Second) storage solutions.

- Data Lifecycle Complexity: From raw collection to cleaned datasets and versioned model weights, the “Data Footprint” of an AI project grows rapidly, requiring automated tiered storage strategies to keep costs under control.
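A tiered storage policy can be as simple as routing each artifact by how recently it was accessed. The thresholds and tier names in this sketch are hypothetical; in practice they come from your access patterns and your storage provider’s pricing.

```python
from datetime import datetime, timedelta

# Hypothetical age thresholds, checked in order; anything older falls through to "cold".
TIERS = [(timedelta(days=7), "hot"), (timedelta(days=90), "warm")]


def assign_tier(last_accessed, now, pinned=False):
    """Pick a storage tier for an artifact (dataset, checkpoint) from its last access time."""
    if pinned:  # e.g. the weights currently serving production traffic
        return "hot"
    age = now - last_accessed
    for max_age, tier in TIERS:
        if age <= max_age:
            return tier
    return "cold"
```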

Talent and Skill Gaps in AI Development

Building an AI solution requires a specialized workforce that often feels non-existent. While there are millions of traditional software engineers, the pool of professionals who can architect, fine-tune, and secure an AI model is remarkably shallow.

This shortage is a major reason many companies find themselves stuck in prolonged “pilot phases,” where promising demos never quite make it to full-scale production.

Shortage of Skilled AI Professionals

The demand for high-level AI talent has drastically outpaced the supply provided by traditional education. Companies are no longer just looking for people who can write code; they need experts in “LLMOps,” “Agentic Orchestration,” and specialized model optimization.

This scarcity has led to a highly competitive market where securing a single senior AI architect can be as difficult and expensive as hiring an entire team of standard web developers.

Cross-Disciplinary Knowledge Requirements

AI development isn’t just a technical task; it’s a cross-disciplinary challenge that requires engineers to think like domain experts and ethicists. For instance, a developer building an AI for the legal or medical field must understand complex regulatory frameworks and industry-specific nuances to ensure the model’s output is actually useful.

Without this marriage of technical skill and industry knowledge, businesses risk deploying models that are technically sound but practically irrelevant or even legally non-compliant.

Testing, Validation, and Maintenance Challenges

How do you test a system that doesn’t follow a fixed set of rules? In traditional software, you provide an input and expect a specific, pre-defined output. AI flips this script.

Because AI models are probabilistic rather than deterministic, testing becomes a moving target. You aren’t just looking for “broken” code; you are looking for subtle shifts in logic and accuracy that can’t always be caught with a simple unit test.

Difficulty in Testing AI Models

The most significant AI software development problem in quality assurance is the lack of “ground truth.” Since an AI can generate thousands of unique responses, creating a comprehensive test suite is nearly impossible.

- The Non-Deterministic Barrier: You can run the same test twice and get two slightly different results. This makes “flaky tests” a common headache, requiring teams to shift from binary pass/fail grades to statistical confidence scores.

- Explainability and the “Black Box”: When an AI fails, it rarely leaves a trail of breadcrumbs. Validating why a model reached a wrong conclusion requires specialized “Explainable AI” (XAI) tools that can trace which inputs and internal features drove a decision.
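One way to replace binary pass/fail grades with statistical confidence is to run a non-deterministic test many times and require the lower bound of a confidence interval on the pass rate to clear a threshold. This sketch uses the Wilson score interval; `run_case` stands in for any hypothetical function that calls the model and checks its answer.

```python
import math


def wilson_lower_bound(passes, trials, z=1.96):
    """Lower end of the Wilson score interval for a pass rate (z=1.96 is roughly 95% confidence)."""
    if trials == 0:
        return 0.0
    p = passes / trials
    denom = 1 + z * z / trials
    centre = p + z * z / (2 * trials)
    margin = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials))
    return (centre - margin) / denom


def statistical_check(run_case, trials=50, min_rate=0.9):
    """Run a flaky test repeatedly; pass only if we are confident the true pass rate >= min_rate.

    run_case: any callable returning True (pass) or False (fail).
    """
    passes = sum(bool(run_case()) for _ in range(trials))
    return wilson_lower_bound(passes, trials) >= min_rate, passes
```

Even 50 passes out of 50 only yield a lower bound of about 0.93, so a 95% pass-rate requirement would need more trials. That trade-off between test cost and confidence is part of the price of testing probabilistic systems.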

Model Drift and Continuous Learning

Unlike traditional software, which remains static until you update it, an AI model begins to “decay” the moment it is deployed. This phenomenon, known as Model Drift, occurs when the real-world data starts to diverge from the data the model was originally trained on.

- The Silent Decay: Your code might be running perfectly, but if user behavior changes or a new market trend emerges, your AI’s predictions will slowly lose their value. This is a “silent failure” that standard monitoring tools often miss.

- The Continuous Learning Loop: To combat drift, you can’t just set it and forget it. Maintenance in the AI world requires a “Continuous Learning Loop,” where fresh production data is constantly fed back into the system to retrain and realign the model.

This creates a permanent maintenance cycle that is far more resource-intensive than traditional bug-fixing.
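Drift monitoring usually starts by comparing the distribution of live inputs with the training data. The sketch below computes the Population Stability Index (PSI) for a single numeric feature. A widely used rule of thumb treats PSI below 0.1 as stable and above 0.25 as significant drift, but those cut-offs are conventions, not guarantees.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between training-time and live values of one feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant training feature

    def shares(values):
        counts = [0] * bins
        for v in values:
            i = int((v - lo) / width)
            counts[min(max(i, 0), bins - 1)] += 1  # clamp out-of-range live values into edge bins
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A scheduled job can compute this per feature and trigger an alert or a retraining run when the score crosses your chosen threshold.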

Ethical, Legal, and Compliance Challenges

As AI systems move from experimental tools to core business drivers, they are facing a new level of scrutiny from both the public and global regulators. It is no longer enough for a model to be fast or accurate; it must also be justifiable.

For many organizations, the biggest hurdle in AI software development isn’t the technology itself, but the complex web of ethics and law that governs how that technology interacts with human lives.

Explainability and Transparency

The era of the “Black Box” is drawing to a close. Stakeholders and customers are increasingly demanding to know exactly how an AI reached a specific conclusion, especially in sensitive areas like lending, hiring, or healthcare.

This demand for Explainability and Transparency creates a massive technical challenge, as the most powerful neural networks are often the hardest to interpret. Developers are now tasked with building “interpretable layers” into their systems to ensure that every automated decision can be audited, explained, and defended in plain language.
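For models with an additive structure, an “interpretable layer” can be exact: each feature’s contribution to the score is simply its weight times its value. The sketch below uses a hypothetical linear credit score with made-up weights to turn a decision into plain-language reasons. Deep networks need approximate attribution methods such as SHAP to produce comparable explanations.

```python
# Hypothetical, hand-set weights for an illustrative linear credit score.
WEIGHTS = {"income_k": 0.04, "debt_ratio": -3.0, "late_payments": -0.8}
BIAS = 1.0


def explain(applicant):
    """Return the score plus each feature's additive contribution, largest effect first."""
    contributions = {f: w * applicant[f] for f, w in WEIGHTS.items()}
    score = BIAS + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    reasons = [f"{f} {'raised' if c > 0 else 'lowered'} the score by {abs(c):.2f}" for f, c in ranked]
    return score, reasons
```

Because the contributions sum exactly to the score (minus the bias), every reason can be audited and defended, which is the property regulators tend to ask for.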

Regulatory and Compliance Issues

Navigating the legal landscape has become a primary AI software development problem as governments worldwide introduce strict frameworks to govern machine learning.

From the comprehensive requirements of the EU AI Act to evolving data privacy mandates, businesses must ensure their systems are compliant by design rather than as an afterthought.

Failing to meet these standards doesn’t just result in technical debt; it carries the risk of massive fines and permanent damage to a company’s reputation. Staying ahead requires a proactive approach to “Compliance-as-Code,” where legal boundaries are baked directly into the development lifecycle.
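“Compliance-as-Code” can start as an automated gate in the deployment pipeline. The policy below is entirely hypothetical: the required model-card fields and prohibited features are placeholders, loosely inspired by the documentation and human-oversight duties that frameworks like the EU AI Act attach to high-risk systems, and they are not a reading of any regulation.

```python
# Hypothetical policy values; real requirements come from your legal and compliance teams.
REQUIRED_MODEL_CARD_FIELDS = {"intended_use", "training_data_source", "risk_level", "human_oversight"}
PROHIBITED_FEATURES = {"gender", "race", "religion"}


def compliance_gate(model_card, features):
    """Return policy violations; a CI pipeline would block deployment if any are found."""
    violations = []
    missing = REQUIRED_MODEL_CARD_FIELDS - set(model_card)
    if missing:
        violations.append(f"model card missing: {', '.join(sorted(missing))}")
    banned = PROHIBITED_FEATURES & set(features)
    if banned:
        violations.append(f"prohibited features used: {', '.join(sorted(banned))}")
    if model_card.get("risk_level") == "high" and not model_card.get("human_oversight"):
        violations.append("high-risk model requires a documented human-oversight process")
    return violations
```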

Cost and Time Constraints

While the promise of AI is often framed as a way to save money, the reality of the development phase can be a significant financial shock. Unlike traditional software, where costs are relatively predictable once the requirements are set, AI projects involve a high level of experimentation and resource consumption.

Organizations frequently find that the “sticker price” of an AI pilot is just the tip of the iceberg, with long-term operational costs that can far exceed initial estimates.

High Development and Deployment Costs

The financial burden of AI starts with the specialized hardware and talent required to bring a model to life. High-performance GPUs are not only expensive to purchase but also costly to rent through cloud providers, especially as global demand continues to rise.

Beyond hardware, the specialized expertise needed to manage these systems commands premium salaries, often making the human capital the most expensive part of the budget.

Additionally, once a model is deployed, the ongoing costs of “inference,” the energy and compute power used every time the AI answers a query, can quickly escalate into a massive recurring expenditure that requires strict cost-governance to remain sustainable.
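Inference costs are easy to underestimate, so a back-of-envelope model helps. The function below assumes per-token pricing, as many hosted model APIs use, and takes prices as inputs; the figures in the note that follows are hypothetical.

```python
def monthly_inference_cost(queries_per_day, input_tokens, output_tokens,
                           price_in_per_million, price_out_per_million, days=30):
    """Rough monthly spend for a token-priced model API; plug in your provider's real rates."""
    per_query = (input_tokens * price_in_per_million
                 + output_tokens * price_out_per_million) / 1_000_000
    return per_query * queries_per_day * days
```

For example, 20,000 queries a day at 1,500 input and 500 output tokens, with assumed prices of $3 and $15 per million tokens, comes to about $7,200 a month. Growing each prompt to 6,000 input tokens, for instance by adding more retrieved context, raises the same estimate to about $15,300.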

Longer Development Timelines

One of the most common misconceptions is that AI can be “plugged in” overnight. In reality, the development lifecycle is often significantly longer than traditional software due to the intensive “Data-First” requirements.

Engineering teams must spend months sourcing, cleaning, and labeling data before the first round of training even begins. Furthermore, the iterative nature of model training, where a system must be tested, refined, and retrained multiple times to reach acceptable accuracy, means that timelines are often measured in months or even years rather than weeks.

This unpredictability makes it difficult for businesses to align AI launches with quarterly goals, requiring a more patient and long-term strategic approach.

Security Challenges in AI Software Development

Could your most sophisticated AI model be tricked by a single pixel or a hidden sentence in a text file? As we’ve seen throughout early 2026, the shift toward “Agentic AI,” where models have the power to execute code and access databases, has turned the traditional security landscape upside down.

Protecting these systems is no longer just about firewalls and encryption; it’s about defending the very logic of the machine.

Data Privacy Risks

The race for more capable models has created a “Data Minimization” crisis that many firms are struggling to resolve. In an environment where personal data, from customer transcripts to biometric signals, is ingested at massive scale, the risk of “Privacy Leakage” is a constant threat.

New operational rules under frameworks like India’s Digital Personal Data Protection (DPDP) Act and the EU AI Act now demand that privacy be built into the core architecture of the system.

Failing to implement “Privacy-by-Design” doesn’t just invite a breach; it can lead to massive penalties and a permanent loss of digital trust.
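One small piece of “Privacy-by-Design” is scrubbing obvious personal data before text is stored or used for training. The sketch below redacts email addresses and phone numbers with regular expressions; the patterns are illustrative and far from complete, so real coverage needs dedicated PII-detection tooling.

```python
import re

# Illustrative patterns only: names, addresses, and national IDs are not covered.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "PHONE": re.compile(r"\+?\d[\d\s-]{7,}\d"),
}


def redact(text):
    """Replace matched PII with typed placeholders before storage or training."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text
```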

Adversarial Attacks on AI Models

Perhaps the most alarming development in 2026 is the industrialization of adversarial attacks. Unlike a standard hack, these attacks exploit the mathematical vulnerabilities of a model.

- Indirect Prompt Injection: A single malicious instruction hidden in a retrieved document can hijack an LLM’s behavior, leading it to exfiltrate data or perform unauthorized actions.

- Data Poisoning: Subtle distortions fed into the training pipeline can “teach” a model to misclassify threats or create hidden backdoors that attackers can trigger months after deployment.

- Adversarial Distillation: Competitors and bad actors are increasingly using automated scripts to “query and copy” proprietary model logic, essentially stealing intellectual property without ever touching a company’s server code.
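No filter reliably stops prompt injection, but layered defenses can limit the damage. The sketch below pairs a heuristic scan of retrieved documents with a least-privilege check on tool calls. The phrases, tool names, and approval rule are hypothetical assumptions, and keyword matching is easy to evade, so this is one layer, not a fix.

```python
import re

# Heuristic phrases often seen in injection attempts (illustrative and easy to evade).
SUSPICIOUS = re.compile(
    r"ignore (all |any )?(previous|prior) instructions"
    r"|disregard (the )?system prompt"
    r"|you are now",
    re.IGNORECASE,
)

LOW_RISK_TOOLS = {"search_docs", "summarize"}  # hypothetical read-only tools
HIGH_RISK_TOOLS = {"send_email", "run_sql"}    # hypothetical tools with side effects


def screen_retrieved(doc_text):
    """Return True if retrieved content looks like it is issuing instructions to the model."""
    return bool(SUSPICIOUS.search(doc_text))


def authorize(action, context_flagged, human_approved=False):
    """Least-privilege gate applied to every tool call the model requests."""
    if action in LOW_RISK_TOOLS:
        return True
    if action in HIGH_RISK_TOOLS:
        # Side-effecting tools need explicit human approval and are refused
        # outright when the context that prompted them was flagged.
        return human_approved and not context_flagged
    return False  # unknown actions are denied by default
```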

How to Overcome AI Development Challenges

The conversation around AI is shifting from “What can it do?” to “How do we make it last?” As we move through 2026, the businesses finding the most success are those that have stopped treating AI as a standalone experiment and started treating it as a core operational discipline.

Overcoming the inherent hurdles of this technology requires a move away from manual, “one-off” projects toward a structured, automated, and ethical framework.

Best Practices for Reducing AI Risks

Reducing risk in AI development starts with moving beyond the “black box” approach. High-performing teams are now adopting Explainable AI (XAI) frameworks to ensure that every output can be audited and understood by human stakeholders.

By implementing rigorous data governance, including regular bias audits and automated quality checks, organizations can catch “silent failures” before they impact the end-user.

Additionally, the use of Transfer Learning and Synthetic Data is becoming a standard practice to mitigate data scarcity and protect user privacy, allowing for robust model training without the legal liabilities of raw data ingestion.

The Role of MLOps and Automation

If you want to scale AI without exploding your budget or your timeline, MLOps (Machine Learning Operations) is no longer optional. MLOps brings the discipline of DevOps to the world of AI, automating the most tedious parts of the lifecycle from data cleaning and model versioning to deployment and real-time monitoring.

By using automated retraining pipelines, businesses can respond to “Model Drift” quickly, ensuring that the AI adapts to new data patterns with minimal manual intervention.

This hyper-automation not only reduces human error but also allows small teams to manage large fleets of models, turning a technical bottleneck into a scalable engine for growth.
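Automated retraining is safer when a newly trained model has to earn its promotion. The toy in-memory registry below versions every candidate but only promotes one that beats the current production model on the same holdout set; the class, metric, and minimum-gain threshold are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ModelRegistry:
    """Toy registry: every retrained model is versioned; only better ones are promoted."""
    versions: dict = field(default_factory=dict)  # version -> holdout score (higher is better)
    production: Optional[str] = None
    min_gain: float = 0.005  # assumed minimum improvement to justify a swap

    def register(self, version, holdout_score):
        self.versions[version] = holdout_score

    def promote_if_better(self, candidate):
        """Promote the candidate only if it beats production on the same holdout set."""
        score = self.versions[candidate]
        if self.production is None or score >= self.versions[self.production] + self.min_gain:
            self.production = candidate
            return True
        return False  # keep serving the current model; the candidate stays registered for audit
```

Keeping rejected candidates in the registry preserves an audit trail, which supports the governance goals discussed earlier.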

Importance of Responsible AI Development

In the 2026 regulatory environment, Responsible AI is your greatest strategic advantage. Trust has become the primary currency of the digital economy; customers are only willing to share their data with systems they believe are fair and transparent.

Building with a “Responsible-by-Design” mindset, focusing on fairness, accountability, and environmental sustainability, helps ensure that your innovation doesn’t invite a sudden legal shutdown or a reputational crisis.

Where to Get Reliable Software Development Consultation

Finding a partner who can bridge the gap between complex algorithms and commercial viability is the final, most crucial step in your digital transformation. To navigate the high-stakes world of machine learning without falling into the “80% failure” trap, you need more than just a vendor; you need a strategic advisor who understands the intersection of data integrity and scalable architecture. Hudasoft stands out in this landscape as a premier choice, offering specialized AI services that prioritize transparency, security, and measurable ROI to ensure your vision successfully transitions from a laboratory experiment to a market-leading product.

Conclusion

Ultimately, the path to a successful AI deployment is paved with more than just high-performance code; it is built on a foundation of strategic foresight and rigorous data discipline. While the challenges of artificial intelligence, from technical “model drift” to complex ethical and legal hurdles, can seem daunting, they are far from insurmountable for those who prioritize a structured, “data-first” approach.

By moving away from isolated experiments and toward a culture of automated MLOps and transparent governance, you transform these technical roadblocks into a sustainable competitive edge. Success in this field doesn’t belong to those who rush to follow the hype, but to the leaders who plan for the long term, ensuring their AI systems are as resilient and ethical as they are intelligent.

FAQs

What is a challenge of using AI in software development?

A major challenge is non-determinism, meaning the AI can produce different outputs for the same input. This makes traditional testing difficult because developers cannot rely on fixed “pass/fail” results, requiring a shift toward statistical validation and constant human oversight to catch potential “hallucinations.”

What problems do AI developers currently face?

Developers are primarily struggling with Model Drift and data quality. Even a perfectly built model will lose accuracy as real-world trends change, forcing teams to build expensive, automated pipelines to constantly clean data and retrain systems to keep them relevant and reliable.

What is the major problem in AI development?

The “Black Box” nature of complex algorithms remains the biggest hurdle. Because it is difficult to explain exactly how a model reaches a specific conclusion, businesses face massive transparency and trust issues, especially in regulated industries where every automated decision must be auditable and justifiable.

What are the risks of AI in software development?

The most pressing risks are security vulnerabilities and intellectual property leaks. AI tools can inadvertently suggest insecure code or expose sensitive training data through “privacy leakage,” while adversarial attacks like prompt injection can trick the system into bypassing its own safety guardrails.
